The Red Breve

Apr 17, 2024

Summer Reading Activities: Fun in the Sun with Literacy!

Are you looking for fun ways to practice reading and writing skills over the summer? Although reading books is always great, here are some more active ways to engage your students in literacy over the summer.

Apr 4, 2024

Fun Ways to Build Kinesthetic Awareness: Unlocking the Power of Kinesthetic Awareness in Phonemic Sensitivity

Are you looking for engaging ways to build kinesthetic awareness of sounds in learners? Here are a few fun activities to foster kinesthetic awareness of sounds, empowering learners to distinguish and produce sounds correctly and confidently.

Mar 12, 2024

Misconceptions About the English Language: How We Can Provide a More Complete Picture

Learning a more complete set of basic phonograms along with accurate spelling rules is the most efficient route to mastering English. Instead of leaving learners with so many exceptions, we have the tools to decode 98% of English words.

Dec 27, 2023

Beyond the Drill: Fun and Creative Phonogram Practice at Home

Whether you are teaching your child from home or supporting classroom instruction, consistent practice with a playful approach can be a highly effective way for students to internalize phonogram knowledge that allows them to fully unlock the written English language.

Nov 16, 2023

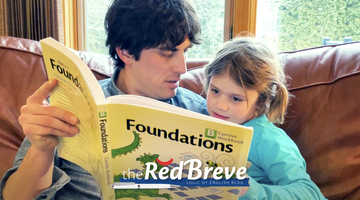

A Guiding Light in Early Education: Logic of English Foundations

Our journey with Logic of English Foundations has been nothing short of transformative. It transcended its role as an academic tool, fostering family bonding and turning each lesson into a shared adventure and each accomplishment into collective joy.

Oct 20, 2023

What is the Science of Reading?

The Science of Reading is a body of research, gathered over decades, that sheds light on how people learn to read. This research focuses on the whys and hows of reading in the brain and on how neural pathways are formed for fluent, successful reading.

Sep 7, 2023

Beyond the ABCs: Teaching Phonograms in the Early Years

The more I explored the ins and outs of how and why Logic of English teaches phonograms and spelling rules, the more I understood why my son was struggling, and better yet, how I could help him start connecting the dots.

Aug 15, 2023

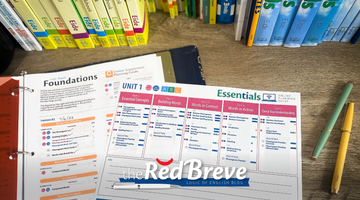

Back-to-School Planning: Using the Foundations and Essentials Planning Guides

My husband and I both work from home and both contribute to our children’s education. Because of this, planning is key to our homeschool success. The planning guides for Foundations and Essentials have taken the legwork out of my LOE planning process.

Jun 15, 2023

The Science of Reading and Early Childhood Literacy: Using Play and Games To Inspire Confidence and Curiosity

Early childhood is also an ideal time to introduce children to early decoding skills: phonemic awareness and systematic phonics. The goal is to spark their curiosity about written words, help them understand that all words are made up of sounds and that the letters on the page represent those sounds, and build their confidence that they will be able to decode words to create meaning.

May 11, 2023

How To Guide a Summer Reading Camp Using the Essentials Reader

The phonograms and spelling rules will make a huge difference in students' ability to read and spell, but if you approach them through fun and with doable expectations, you will also help students rebuild their self-confidence and hopefully reignite their love for reading and writing as well!

Apr 6, 2023

Three Ways To Help Develop Phonemic Awareness at Home and in the Classroom

For students to become strong readers and spellers, teachers must help them develop phonemic awareness skills such as blending and segmenting. Practicing blending words from an auditory prompt prepares students for reading success.

Mar 2, 2023

A Response to Sold a Story: A Parent’s Perspective

There is nothing like seeing the joy on my daughter’s face as she learns new sound after new sound and increasingly puts those sounds together to discover words that she recognizes.